Conclusion

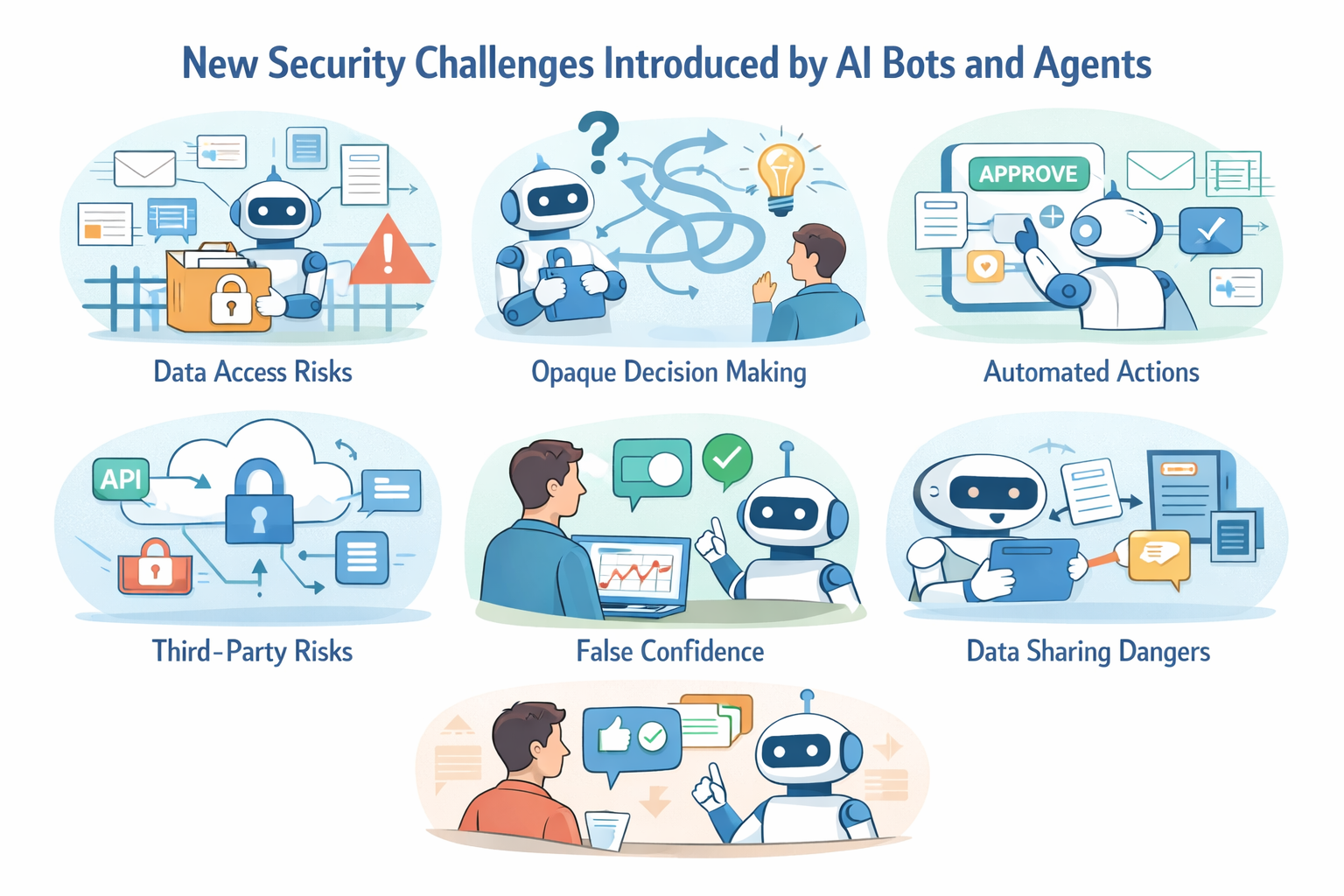

AI bots and agents can deliver real business value, but that value only scales when governance, visibility, and control scale with it. For enterprise leaders, the priority is not to slow adoption but to introduce AI in a way that strengthens security discipline rather than exposing long-standing weaknesses at greater speed.

A practical path forward is to start with focused, lower-risk use cases and expand only when governance proves effective:

- pick one domain such as operations, service management, or internal support

- expose a small set of approved enterprise data sources and read-only actions behind strong identity controls and audit logging

- define measurable outcomes such as faster triage, fewer manual handoffs, better response quality, or reduced context-gathering effort

- expand to bounded write actions only after approval rules, observability, and accountability are working as intended
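To make the steps above concrete, here is a minimal Python sketch of how read-only actions, per-agent grants, audit logging, and an approval gate for write actions might fit together. It is an illustration under stated assumptions, not a reference implementation: `ActionGate`, `Action`, the mode constants, and the ticket actions are all hypothetical names, and the in-memory list stands in for whatever audit store and identity provider an enterprise actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional

# Hypothetical action modes; the names are illustrative only.
READ_ONLY = "read_only"
BOUNDED_WRITE = "bounded_write"


@dataclass
class Action:
    name: str
    mode: str  # READ_ONLY or BOUNDED_WRITE
    handler: Callable[[dict], str]


@dataclass
class ActionGate:
    """Runs only registered, granted actions and records every attempt."""
    actions: dict = field(default_factory=dict)
    grants: dict = field(default_factory=dict)  # agent_id -> set of action names
    audit_log: list = field(default_factory=list)  # stand-in for a real audit store

    def register(self, action: Action) -> None:
        self.actions[action.name] = action

    def grant(self, agent_id: str, action_name: str) -> None:
        self.grants.setdefault(agent_id, set()).add(action_name)

    def invoke(self, agent_id: str, action_name: str, params: dict,
               approved_by: Optional[str] = None) -> str:
        action = self.actions.get(action_name)
        if action is None or action_name not in self.grants.get(agent_id, set()):
            decision = "denied: not granted"  # least privilege: deny by default
        elif action.mode == BOUNDED_WRITE and approved_by is None:
            decision = "denied: approval required"
        else:
            decision = "allowed"

        # Log every attempt, including denials, before anything runs.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action_name,
            "approved_by": approved_by,
            "decision": decision,
        })
        if decision != "allowed":
            raise PermissionError(decision)
        return action.handler(params)


if __name__ == "__main__":
    gate = ActionGate()
    gate.register(Action("lookup_ticket", READ_ONLY,
                         lambda p: f"ticket {p['id']}: open"))
    gate.grant("support-bot", "lookup_ticket")
    print(gate.invoke("support-bot", "lookup_ticket", {"id": 42}))
```

In this sketch a pilot would start with only `READ_ONLY` actions granted. Write actions can be registered later without changing the gate, and they stay blocked until a named approver is supplied, which mirrors the "expand only after approval rules are working" step.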

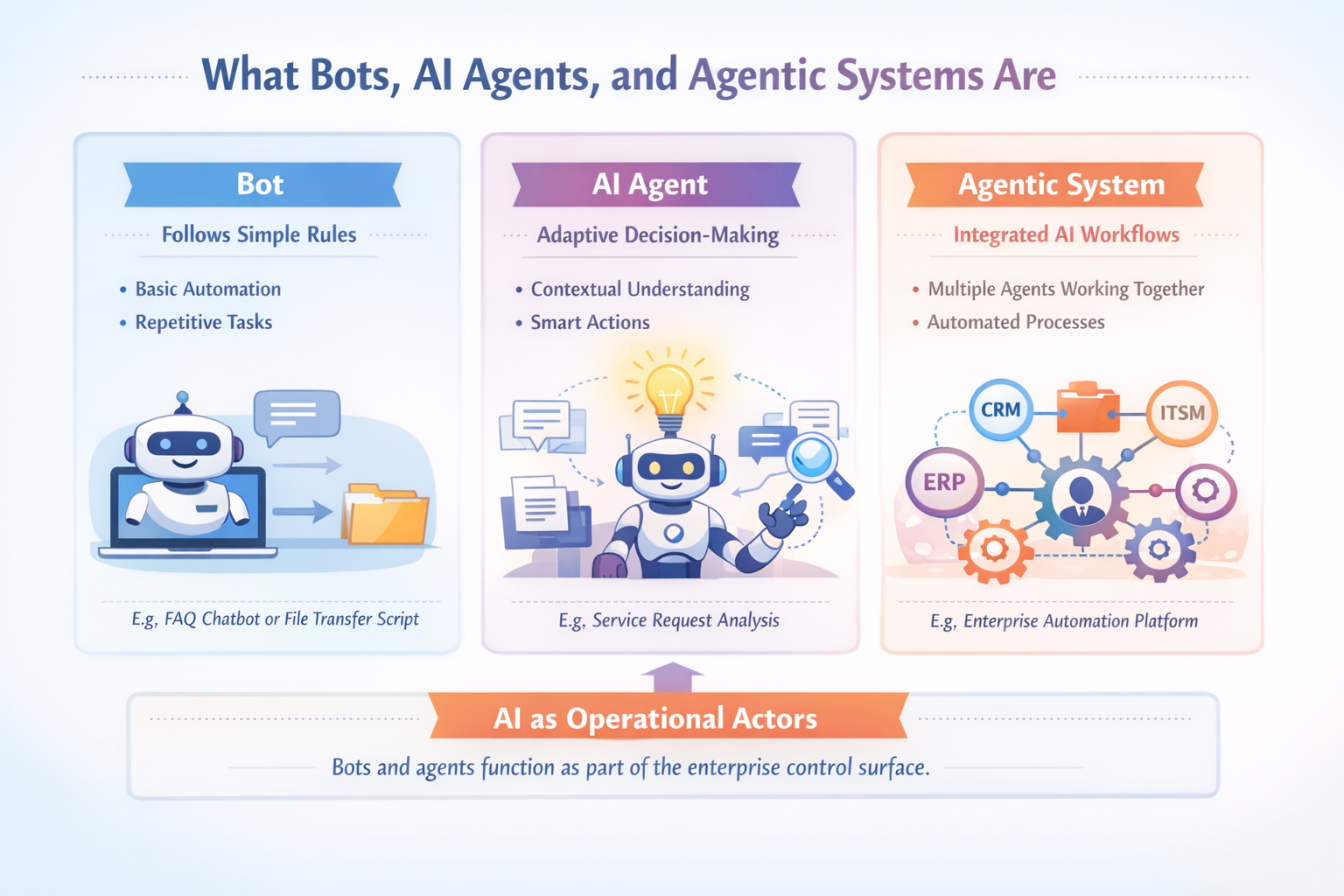

The value proposition is simple: use AI to improve speed and decision support without weakening oversight. That means treating bots and agents as operational actors, applying least-privilege access, strengthening observability, defining approval boundaries, and ensuring that vendor and platform controls meet enterprise expectations.

This is where FAMRO LLC can help. Based in the UAE, FAMRO LLC brings deep AI/ML and enterprise IT experience with a proven delivery track record. Our teams have worked hands-on with AI and machine learning systems since 2018, supporting organizations from early experimentation to production-scale deployment. We help enterprises move from AI ambition to controlled, enterprise-ready execution.

We support secure AI adoption end-to-end, including:

1. AI readiness and risk assessment: identify high-value use cases, evaluate access exposure, and define realistic rollout priorities

2. Secure architecture and implementation: design AI-enabled workflows with least-privilege access, bounded actions, and clear system ownership

3. Identity, authorization, and approval design: apply enterprise IAM patterns, role-based access, and approval checkpoints for higher-impact actions

4. Observability and operational controls: establish logging, monitoring, traceability, and review processes so AI activity is visible and accountable

5. Governance and policy framework: define approved tools, data handling rules, third-party review standards, and operating models that support security and compliance expectations

6. Delivery acceleration: help teams move from pilot to production with a phased, governed approach that supports innovation without losing control

To help you get started, we offer a free initial consultation focused on your AI environment, security posture, and enterprise adoption priorities.

If your organization is investing in AI bots and agents and wants confidence, accountability, and control built in from the start, now is the time to act.

🌐 Learn more: Visit Our Homepage

💬 WhatsApp: +971-505-208-240